Dutch Authority Hits Clearview AI with Record €30.5M Penalty

In a landmark decision, Clearview AI, the controversial facial recognition firm, has been hit with its largest GDPR fine to date. The Dutch Data Protection Authority (Dutch DPA) has imposed a €30.5 million penalty on the American company, marking a pivotal moment in the ongoing debate over privacy and data protection in the digital age.

Clearview’s Controversial Practices Under Scrutiny

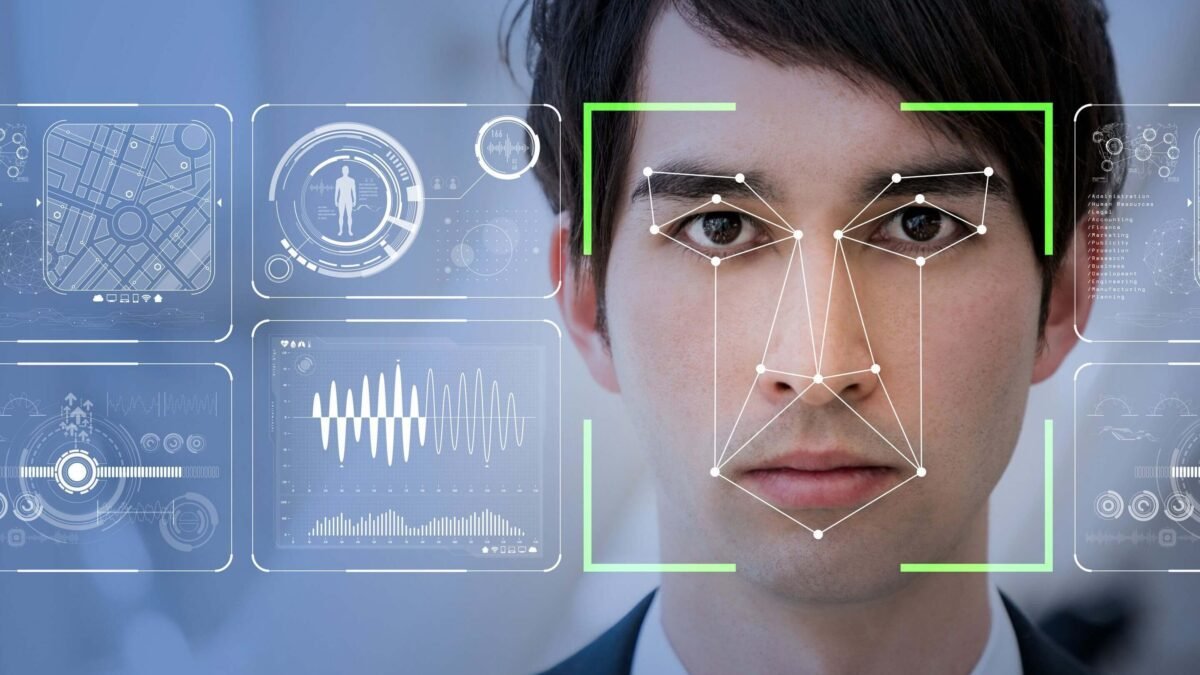

Clearview AI has long been a subject of controversy because of its facial recognition technology and data collection methods. The company’s database, containing over 30 billion images scraped from various internet sources, has raised significant concerns about privacy and consent. This vast collection of biometric data, including photos of Dutch residents, has been deemed illegal under GDPR.

The Dutch DPA’s decision highlights the growing tension between technological innovation and individual privacy rights. Clearview’s practice of collecting and processing personal data without consent has drawn sharp criticism from privacy advocates and regulatory bodies alike.

The Implications of the GDPR Fine

This substantial fine sends a clear message to tech companies operating in the EU: compliance with GDPR is non-negotiable. The Dutch DPA’s actions against Clearview AI demonstrate the seriousness with which European regulators view data protection violations.

Key points of the Dutch DPA’s decision include:

- A €30.5 million fine for GDPR violations

- Additional financial penalties of up to €5.1 million for non-compliance

- An order to stop illegal data collection and processing activities

- A warning that using Clearview’s services is also prohibited

This ruling sets a precedent for how facial recognition technologies and large-scale data collection practices will be regulated under GDPR in the future.

Clearview’s Response and Future Challenges

Despite facing fines from several data protection authorities, Clearview AI has shown little inclination to change its practices. The company asserts that its services are offered only to intelligence and investigative bodies operating outside the European Union. However, this stance has not shielded it from European regulators’ scrutiny.

Aleid Wolfsen, the Dutch DPA’s chairman, highlighted the invasive nature of facial recognition technology, stating, “The use of facial recognition is a deeply invasive practice that cannot be indiscriminately applied to individuals worldwide.” This sentiment reflects growing concerns about the potential for abuse and the erosion of privacy in an increasingly digital world.

Potential Personal Liability for Executives

In an unprecedented move, the Dutch DPA is considering holding Clearview AI’s executives personally liable for the company’s GDPR violations. This approach could set a new standard for corporate accountability in data protection cases.

Wolfsen explained, “We are now going to investigate if we can hold the management of the company personally liable and fine them for directing those violations.” This potential for personal liability adds a new dimension to the enforcement of data protection laws and could serve as a powerful deterrent for other companies considering similar practices.

The Dutch regulator’s consideration of personal liability for Clearview AI’s executives represents a significant escalation in enforcement tactics. This approach could have profound implications for corporate governance and risk management in the tech sector.

If executives face personal liability for their companies’ data protection failures, it could lead to:

- Increased board-level attention to privacy and compliance issues

- More robust due diligence in mergers and acquisitions

- Greater investment in privacy-enhancing technologies and practices

- A shift in corporate culture toward prioritizing data protection

This development could prompt executives to become more directly involved in their companies’ data practices, potentially leading to more privacy-conscious decision-making at the highest levels of organizations.

The Broader Implications for Data Protection and Privacy

The Clearview AI case serves as a crucial reminder of the importance of data protection and privacy in our digital age. It raises several key questions:

- How can we balance technological innovation with individual privacy rights?

- What role should commercial entities play in collecting and processing biometric data?

- How can regulators effectively enforce data protection laws in a global, digital economy?

These questions are not easily answered, but the Dutch DPA’s decision takes a clear stance on the matter: the indiscriminate collection and use of personal data, especially biometric information, without consent is unacceptable under GDPR.

The Future of Facial Recognition Technology

While the Dutch DPA acknowledges the potential benefits of facial recognition technology for law enforcement and security purposes, it emphasizes that such tools should be used only under strict conditions and oversight. The authority suggests that if such technologies are to be used, they should be managed by competent authorities themselves, not by commercial entities like Clearview AI.

This stance could have far-reaching implications for the development and deployment of facial recognition technologies in the EU and potentially worldwide. It could lead to more stringent regulations and increased scrutiny of companies operating in this space.

Conclusion: A Watershed Moment for Data Protection

The Dutch DPA’s decision against Clearview AI marks a significant milestone in the enforcement of GDPR and data protection laws. It sends a clear message that even companies without a physical presence in the EU are subject to these regulations when processing EU residents’ data.

As we move forward, this case will likely serve as a reference point for future data protection disputes and shape the development of privacy-conscious technologies. It underscores the need for companies to prioritize data protection and privacy by design, rather than treating them as afterthoughts.

The Clearview AI case reminds us that in our increasingly interconnected world, the right to privacy remains a fundamental human right, one that regulators are prepared to defend vigorously. As technology continues to evolve, so too must our approaches to protecting individual privacy and ensuring responsible data practices.

Feature Image Source: Yandex